Sprint Review: the complete 2026 guide to running high-impact Scrum sprint reviews that drive actionable feedback and Product Backlog decisions

A Sprint Review is the Scrum event designed to inspect the Increment produced during the Sprint and adapt what to do next based on real evidence, stakeholder input, and changes in the environment. Teams often reduce it to a demo, yet the highest value comes from turning a working product slice into a structured product conversation that informs prioritization, scope trade-offs, and risk management. When you run the Sprint Review well, you align stakeholders around the Product Goal, clarify what “value” means now, and update the Product Backlog with sharper, more decision-ready items. In 2026, teams operate under tighter delivery expectations, more dependencies, and more ambiguous requirements, which makes the Sprint Review a critical decision point rather than a ceremonial meeting. A good Sprint Review builds trust through transparency and predictable cadence, while a weak one creates noise, frustration, and a backlog full of untested assumptions.

What a Sprint Review really is: definition, purpose, and tangible outcomes

A Sprint Review produces a concrete output: a clearer path forward expressed through a refined and reordered Product Backlog, plus shared understanding of what the latest Increment changes for users and the business. The event exists to connect the team’s delivery to stakeholder learning, so the product direction can adjust before small uncertainties turn into expensive rework. A successful review makes the Increment visible, invites feedback, and turns feedback into decisions or clearly framed follow-up exploration. It also provides an honest picture of progress toward the Product Goal, which helps stakeholders align expectations and investments. When this event works, it reduces surprises, surfaces constraints early, and turns product planning into a living system rather than a static promise.

What a Sprint Review is not: three common misunderstandings that destroy value

Many teams sabotage the Sprint Review by treating it as a status meeting, a gate for approval, or a polished presentation, and each mistake removes the learning loop Scrum is trying to create. A status meeting encourages justification and defensive explanations instead of curiosity, which quickly discourages meaningful stakeholder participation. Turning the review into an acceptance gate undermines the Definition of Done and shifts responsibility from clear quality standards to subjective meeting-time judgment. Making it a slide-based show disconnects stakeholders from real product behavior, so the team receives shallow opinions rather than insight grounded in actual use. A Sprint Review should feel like a working session that invites questions, tests assumptions, and ends with a clearer backlog, not like a performance that ends with applause.

Why Sprint Reviews matter for business outcomes: value, risk, and alignment

The Sprint Review is one of the fastest ways to improve product decision quality because it replaces speculation with evidence from a working Increment. Showing real behavior exposes hidden constraints, design issues, and integration gaps that rarely appear in documents or roadmaps. It also shortens decision cycles: stakeholders can react while context is fresh, and the team can turn that reaction into backlog adaptations before committing to the next Sprint’s work. Over time, consistent Sprint Reviews create a trust loop, where stakeholders see transparency and the team gains credible support for trade-offs and sequencing. In 2026, when products rely on complex ecosystems and distributed delivery, the Sprint Review becomes a lightweight governance mechanism that keeps decisions close to reality.

The mindset shift that changes everything: from “shipping more” to “learning faster”

A high-performing Sprint Review optimizes learning, not volume, and that single shift changes how you choose what to show and what to discuss. Instead of trying to demonstrate every completed ticket, you focus on the Increment areas that carry the most uncertainty, the highest user impact, or the greatest business risk. You design the session to validate assumptions, test workflows, and discover whether stakeholders still agree on what “value” means right now. This approach prevents the “Sprint accounting” anti-pattern, where exhaustive demos wear out the audience and dilute the few insights that actually matter. When learning becomes the priority, the backlog naturally becomes sharper, because items must justify their existence through measurable outcomes, risk reduction, or strategic fit.

Who should attend: roles, responsibilities, and stakeholder selection

The right attendance determines whether a Sprint Review produces useful feedback or polite silence, because feedback quality depends on decision authority and domain relevance. The Scrum Team must be present, including the Product Owner, the Developers, and the Scrum Master, because each brings essential perspectives on value, feasibility, and process health. Stakeholders should represent actual value creation and constraints, such as customer-facing teams, operations, compliance, security, finance, or strategic leaders who influence prioritization. Inviting everyone “just in case” often backfires, because oversized meetings reduce participation and amplify off-topic discussion. A better approach is to invite a focused group that can contribute insight, validate assumptions, and help the team make trade-offs that move the product forward.

Who presents what: a practical model that keeps the review crisp and credible

You get the strongest results when you deliberately assign presentation responsibilities instead of improvising, because role clarity prevents the meeting from becoming a monologue. The Product Owner should anchor the context by restating the Sprint Goal and connecting the Increment to the Product Goal and current priorities. The Developers should demonstrate the Increment in real product flows, because they can explain behavior, limitations, and trade-offs with precision and credibility. The Scrum Master should facilitate the conversation, manage time, and protect psychological safety so stakeholders ask honest questions and the team hears them as learning signals rather than criticism. This shared approach keeps the review balanced: value framing, evidence demonstration, and structured discussion that ends with backlog adaptation.

Timeboxing and duration: how long should a Sprint Review be in 2026?

Timeboxing protects the purpose of the Sprint Review by forcing prioritization of the conversation, and it also respects stakeholder attention in busy organizations. Scrum provides a clear quantitative reference: the Sprint Review is timeboxed to a maximum of 4 hours for a one-month Sprint, and for shorter Sprints the event is usually shorter. That number is not a target to hit, but a guardrail that reveals problems when you regularly exceed it, such as poor preparation, overly detailed demos, or uncontrolled discussion. A well-run review typically balances demonstration and conversation, leaving enough room for questions, trade-off exploration, and alignment on next steps. In practice, the best indicator of an appropriate duration is whether you can update the backlog and clarify decisions without rushing or dragging.
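To make the guardrail concrete, the Python sketch below scales the four-hour cap linearly with Sprint length. The linear scaling and the treatment of a one-month Sprint as four weeks are assumptions (a common rule of thumb, not a Scrum rule), and `review_timebox_minutes` is a hypothetical helper name.

```python
MAX_REVIEW_MINUTES = 240     # 4-hour maximum for a one-month Sprint
WEEKS_PER_MONTH_SPRINT = 4   # assumption: a one-month Sprint is treated as four weeks


def review_timebox_minutes(sprint_weeks: float) -> int:
    """Upper bound for a Sprint Review, scaled linearly by Sprint length.

    Linear scaling is an assumed rule of thumb; the result is a ceiling,
    not a target duration.
    """
    if not 0 < sprint_weeks <= WEEKS_PER_MONTH_SPRINT:
        raise ValueError("Sprints are one month or shorter; expected 0 < weeks <= 4")
    return round(MAX_REVIEW_MINUTES * sprint_weeks / WEEKS_PER_MONTH_SPRINT)


for weeks in (1, 2, 3, 4):
    print(f"{weeks}-week Sprint: at most {review_timebox_minutes(weeks)} minutes")
```

Under these assumptions a two-week Sprint gets a ceiling of 120 minutes; if your reviews regularly run into that ceiling, treat it as a signal to revisit preparation rather than to book longer slots.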

Invitation cadence: how consistent scheduling increases stakeholder engagement

Consistency increases attendance because stakeholders can plan around the Sprint Review as a reliable decision point, not a last-minute interruption. You strengthen this effect by keeping the time and day stable, publishing the agenda early, and making it obvious what kind of input you need from stakeholders. In hybrid and remote settings, this reliability is even more important because fragmented schedules and meeting fatigue quickly reduce voluntary participation. A stable cadence also creates a rhythm of transparency, where stakeholders learn that the product evolves in increments and that their feedback leads to visible change in the Product Backlog. When the Sprint Review becomes predictable, it functions like a lightweight product steering mechanism, reducing the need for separate governance meetings that often duplicate discussion without real evidence.

Preparation: the phase that determines most of Sprint Review quality

Preparation is where most Sprint Review outcomes are decided, because the meeting cannot compensate for missing clarity, unstable demos, or undefined decisions. Start by ensuring the Increment meets the Definition of Done, since nothing erodes stakeholder confidence faster than a review built on “almost finished” work. Next, choose what you want to learn: identify the riskiest assumptions, the biggest value drivers, and the areas where stakeholder input can unlock a better decision. Then craft a narrative that connects the Increment to real user journeys, because stakeholders respond to impact and workflow more than to a list of completed stories. Finally, decide how you will capture feedback and translate it into backlog actions, otherwise the review becomes entertainment rather than a decision-making engine.

A repeatable operational checklist that prevents common failures

A checklist reduces cognitive load and makes Sprint Reviews reliable even when teams change, products grow, or stakeholder groups rotate. Before the meeting, confirm what is “Done,” verify access to the environment, and test the demo path end-to-end so you do not spend precious minutes troubleshooting. Define the decision points you want to reach, such as whether to prioritize an enhancement, schedule a technical investment, or adjust scope based on new constraints. Prepare three open-ended questions designed to elicit useful input, such as “What would prevent real use?” or “What risk do you see in deploying this?” Ensure you have a visible mechanism to capture input and convert it into backlog items or follow-up exploration, because feedback that disappears destroys stakeholder motivation to contribute next time.

- Increment readiness: confirm Definition of Done, stability, access, and demo environment.

- Selection: choose items that maximize learning, value validation, or risk reduction.

- User-flow script: demonstrate impact through realistic scenarios, not ticket lists.

- Decision targets: define what must be decided now versus what must be explored.

- Feedback capture: record themes, owners, and the resulting Product Backlog changes.
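Teams that keep their review preparation in a script or tool could encode the five checklist items above as a simple record and list whatever is still open before the meeting. This is a minimal Python sketch; the class and field names are hypothetical and simply mirror the checklist.

```python
from dataclasses import dataclass, fields


@dataclass
class ReviewReadiness:
    """Hypothetical pre-review checklist mirroring the five items above."""
    increment_ready: bool = False         # Definition of Done, stability, access, demo environment
    selection_made: bool = False          # items chosen for learning, value validation, or risk reduction
    user_flow_scripted: bool = False      # realistic scenarios rather than ticket lists
    decision_targets_set: bool = False    # "decide now" versus "explore" agreed in advance
    feedback_capture_ready: bool = False  # a place to record themes, owners, and backlog changes

    def missing(self) -> list[str]:
        """Return the names of checklist items that are still open, in checklist order."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


check = ReviewReadiness(increment_ready=True, selection_made=True, user_flow_scripted=True)
print(check.missing())  # ['decision_targets_set', 'feedback_capture_ready']
```

Keeping the check binary is deliberate: an item is either ready or it is a reason to adjust the agenda before stakeholders arrive.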

A high-impact Sprint Review agenda: structure that drives feedback and decisions

An effective Sprint Review agenda creates momentum without becoming rigid, and it keeps the session anchored to learning and adaptation. Start by welcoming participants and restating the Sprint Goal and Product Goal context so everyone shares the same frame. Then clearly state what will be demonstrated and what will not, because this protects time and prevents the meeting from spiraling into unrelated requests. Demonstrate the Increment using real workflows, focusing on the moments that reveal value, usability, constraints, and integration behavior. Reserve explicit time for discussion and feedback, and guide the conversation toward “what changes now” and “what should we do next.” Close by summarizing decisions and showing how the Product Backlog will be adjusted, because visible adaptation is the signal that the review mattered.

Facilitation script: questions that turn a demo into a product conversation

The questions you ask shape the quality of feedback, and a Sprint Review without well-crafted prompts often produces generic opinions that cannot guide prioritization. Ask stakeholders to describe the outcome they want, the risk they fear, or the workflow friction they anticipate, because these forms of feedback are easier to translate into backlog actions. Encourage alignment by asking how the Increment supports the Product Goal and what trade-offs stakeholders accept to reach it. Avoid forcing immediate answers to complex questions like release timing or large commitments when critical information is missing, because rushed decisions increase rework. Use facilitation to separate ideas into “decide now,” “explore,” and “park,” which preserves meeting energy while keeping the backlog adaptable and grounded in real insight.

Turning feedback into a stronger Product Backlog: practical methods that prevent “review without follow-through”

Feedback only creates value when it changes decisions, and that requires translating comments into backlog actions with clear intent and measurable outcomes. Start by sorting input into three buckets: confirmation signals that reinforce direction, corrective signals that demand near-term changes, and exploratory signals that indicate opportunities or uncertainties. Convert feedback into the right kind of backlog element, because not everything should become a user story; some items should become hypotheses, spikes, usability tests, or non-functional requirements. Attach success criteria or evaluation signals to each item so the next Sprint can verify whether the adaptation worked. End the review by showing the updated Product Backlog or at least summarizing the changes, because stakeholders contribute more responsibly when they see that their feedback leads to visible, thoughtful action.
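The sorting step can be sketched in Python as a small triage function. The mapping from signal to backlog element type (corrective to user story, exploratory to hypothesis, confirmation to no new item) is an assumed default for illustration; in practice a team would also choose spikes, usability tests, or non-functional requirements case by case. All names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Signal(Enum):
    CONFIRMATION = "confirmation"  # reinforces the current direction
    CORRECTIVE = "corrective"      # demands a near-term change
    EXPLORATORY = "exploratory"    # points to an opportunity or an open uncertainty


# Assumed default mapping; real teams pick the element type per item.
DEFAULT_KIND = {
    Signal.CORRECTIVE: "user story",
    Signal.EXPLORATORY: "hypothesis",
}


@dataclass
class BacklogAction:
    kind: str
    description: str
    success_signal: str  # how the next Sprint will check whether the change worked


def to_backlog_action(text: str, signal: Signal, success_signal: str) -> Optional[BacklogAction]:
    """Turn one piece of review feedback into a backlog action, or None for pure confirmation."""
    if signal is Signal.CONFIRMATION:
        return None  # keep as evidence for the current direction; no new item needed
    if not success_signal:
        raise ValueError("every new backlog item needs a success or evaluation signal")
    return BacklogAction(DEFAULT_KIND[signal], text, success_signal)


action = to_backlog_action(
    "Operations cannot export the audit log in the required format",
    Signal.CORRECTIVE,
    "Compliance accepts an exported log without manual edits",
)
print(action.kind)  # user story
```

Refusing to create an item without a success signal is the point of the sketch: it enforces the rule that each adaptation must be verifiable in the next Sprint.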

Decide live or decide later: a simple decision framework that avoids chaos

A strong Sprint Review balances decisiveness with discipline, because not all decisions belong in the meeting. Decide during the review when the decision is reversible, the right people are present, and the information is sufficient, such as swapping two backlog items or clarifying a constraint that unblocks work. Defer decisions when they are high-impact and irreversible, or when they require additional data, such as legal review, security assessment, deeper technical analysis, or broader customer validation. This approach prevents the team from making commitments under social pressure and then retracting them, which damages trust. Capture deferred decisions as explicit follow-ups with owners and due dates, so stakeholders see that deferral is a responsible choice rather than avoidance, and the Product Backlog remains a truthful reflection of what will happen next.
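The rule above reduces to three conditions and one obligation, which this Python sketch makes explicit: decide live only when the decision is reversible, the right people are present, and the information is sufficient; otherwise defer, but only with an owner and a due date. The function name, fields, and example date are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Decision:
    topic: str
    reversible: bool
    right_people_present: bool
    information_sufficient: bool


@dataclass
class Outcome:
    topic: str
    decide_now: bool
    owner: Optional[str] = None  # required when the decision is deferred
    due: Optional[date] = None   # required when the decision is deferred


def triage(d: Decision, owner: str = "", due: Optional[date] = None) -> Outcome:
    """Decide live only when all three conditions hold; otherwise defer explicitly."""
    if d.reversible and d.right_people_present and d.information_sufficient:
        return Outcome(d.topic, decide_now=True)
    if not owner or due is None:
        raise ValueError(f"deferred decision '{d.topic}' needs an owner and a due date")
    return Outcome(d.topic, decide_now=False, owner=owner, due=due)


swap = triage(Decision("Swap backlog items A and B", True, True, True))
launch = triage(
    Decision("Enable the feature for all EU customers", False, True, False),
    owner="Product Owner",
    due=date(2026, 3, 15),  # illustrative date
)
print(swap.decide_now, launch.decide_now, launch.owner)  # True False Product Owner
```

Raising an error on an ownerless deferral mirrors the text: deferral is only responsible when someone is accountable for closing it.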

Best practices for 2026: making Sprint Reviews engaging, actionable, and decision-oriented

In 2026, the best Sprint Reviews share a few traits: they are hands-on, they visualize progress, and they end with visible adaptation. Whenever possible, let stakeholders interact with the product rather than watching a screen share of clicks, because real interaction reveals friction and misunderstanding that conversation alone can miss. Visualize progress toward the Product Goal using simple indicators that stakeholders can interpret, so the Increment feels like a step on a path rather than a pile of features. Encourage feedback framed as problems, outcomes, and risks rather than prescribed solutions, because solution-level feedback often conflicts and becomes political. Protect the session from drift by using a parking lot for off-topic issues and by returning to decision targets, so the meeting produces backlog changes instead of vague agreement.

Using AI without degrading feedback quality: a realistic advantage in 2026

AI-assisted workflows can strengthen Sprint Reviews when you use them for synthesis and pattern detection rather than for decision-making. A widely cited 2026 benchmark from the AI4Agile Practitioners Report indicates that 67% of organizations already provide access to AI tools, which means many teams can standardize review preparation and follow-up using assisted summaries and thematic clustering. You can use AI to consolidate notes, group feedback into themes, propose backlog item wording, and generate follow-up questions tailored to stakeholder roles, which reduces administrative overhead. The risk is that automated summaries can flatten nuance and turn sharp signals into generic statements that do not lead to action. Treat AI as a drafting assistant, then validate each synthesized point against real stakeholder intent and ensure each item has an owner, an outcome, and a link to the Product Backlog.

Anti-patterns that kill Sprint Review value: symptoms, causes, and fixes

Most failing Sprint Reviews share recurring anti-patterns that sever the link between the Increment, learning, and backlog adaptation. “Death by PowerPoint” replaces evidence with narration, so stakeholders comment on a story rather than on product reality, and the feedback becomes speculative. Turning the review into a release gate creates fear, defensiveness, and negotiation, undermining both transparency and the Definition of Done as the true quality contract. Exhaustive demos drain attention, so the few high-value topics receive little discussion and the meeting ends without clear decisions. Fixes start with tighter selection, scenario-based demonstrations, and explicit decision targets that make the review an active working session. When you correct these patterns, stakeholder participation often improves immediately because the session becomes shorter, sharper, and more obviously useful.

A fast diagnostic you can run in real time during a struggling review

You can diagnose a broken Sprint Review by listening for patterns in participant behavior, because meeting dynamics reveal underlying purpose and trust issues. If stakeholders stop attending, the root cause is usually low perceived value, which often comes from long demos with little discussion or from feedback that never leads to visible backlog changes. If the team becomes defensive, the review has likely become a judgment forum, and you need to reframe it around inspection and adaptation using neutral, outcome-based questions. If stakeholders flood the meeting with requests, you likely lack shared prioritization criteria, so you must reconnect each idea to the Product Goal and clearly distinguish between “capture” and “commit.” The fastest improvement is to tighten the agenda, show fewer but more meaningful increments, and end with a clear summary of decisions and follow-ups that demonstrates real adaptation.

Sprint Review vs Sprint Retrospective: clean separation that improves both events

Mixing Sprint Review and Sprint Retrospective creates hybrid meetings that satisfy neither purpose, because product feedback and process improvement require different audiences and psychological conditions. The Sprint Review focuses on the product Increment, stakeholder input, environmental change, and adaptation of the Product Backlog, and it benefits from open participation of external stakeholders. The retrospective focuses on how the Scrum Team works, what is slowing it down, and what experiments can improve delivery and collaboration, which often requires a more private and candid space. When teams blend these events, they either lose stakeholder learning or lose team safety, and both outcomes degrade performance. Keep the events distinct: use the review to steer product direction through evidence and feedback, and use the retrospective to raise the team’s capacity to deliver a high-quality Increment consistently.

Measuring Sprint Review effectiveness: a simple scorecard that drives continuous improvement

Measuring Sprint Review effectiveness does not mean adding bureaucracy; it means verifying that the meeting produces adaptation and better decisions. Track whether key stakeholders attend consistently, because absenteeism often signals poor value perception or unclear decision relevance. Evaluate feedback usefulness by checking how often feedback becomes backlog changes, and whether those changes include clear outcomes or validation plans. Observe the balance of time spent demonstrating versus discussing, because a review with no discussion is usually a demo, not a working session. Watch how often the Product Backlog changes after the review, because a backlog that never adapts suggests either low learning or low willingness to act on learning. These indicators turn the review into an improvable product system rather than a fixed ritual, and they help you diagnose problems early.
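If you record a few numbers after each review, the indicators above can be computed as simple ratios. This Python sketch assumes a hypothetical per-review record; the field names and the choice of ratios are illustrative, and trends across several reviews matter more than any single value.

```python
from dataclasses import dataclass


@dataclass
class ReviewRecord:
    key_stakeholders_invited: int
    key_stakeholders_attended: int
    feedback_items: int
    feedback_items_to_backlog: int  # feedback that became a backlog change
    demo_minutes: int
    discussion_minutes: int
    backlog_items_changed: int      # items added, removed, or reordered after the review


def _ratio(part: float, whole: float) -> float:
    """Safe division that returns 0.0 when there is nothing to divide by."""
    return part / whole if whole else 0.0


def indicators(r: ReviewRecord) -> dict[str, float]:
    """Compute per-review indicators; compare them across reviews to spot trends."""
    return {
        "attendance_rate": _ratio(r.key_stakeholders_attended, r.key_stakeholders_invited),
        "feedback_conversion": _ratio(r.feedback_items_to_backlog, r.feedback_items),
        "discussion_share": _ratio(r.discussion_minutes, r.demo_minutes + r.discussion_minutes),
        "backlog_adapted": float(r.backlog_items_changed > 0),
    }


result = indicators(ReviewRecord(6, 5, 12, 4, 40, 35, 5))
print({k: round(v, 2) for k, v in result.items()})
# {'attendance_rate': 0.83, 'feedback_conversion': 0.33, 'discussion_share': 0.47, 'backlog_adapted': 1.0}
```

A low discussion share or a backlog that never adapts across several reviews is the kind of pattern the text describes as an early warning.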

A five-criteria scorecard that fits on mobile and stays actionable

A scorecard works best when it is short, consistent, and tied to behaviors that teams can actually change. Rate the clarity of the Sprint Goal and Product Goal framing at the start, because poor framing produces unfocused feedback. Rate the realism and impact of the demonstration, because scenario-driven demos produce better insight than ticket walkthroughs. Rate the quality of stakeholder feedback by checking whether it includes outcomes, risks, and constraints rather than vague preferences. Rate decision clarity by confirming whether the group ended with a small set of decisions, experiments, or follow-up analyses, and whether owners were assigned. Finally, rate visible Product Backlog adaptation, because a review that ends without changes rarely improves product direction, even if the conversation felt lively.
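The five criteria fit naturally into a tiny scoring function. This Python sketch assumes a 1 to 5 rating scale and uses hypothetical criterion keys; it returns the mean and the weakest criterion so the team knows what to improve first.

```python
CRITERIA = (
    "goal_framing",        # clarity of Sprint Goal and Product Goal framing
    "demo_realism",        # realism and impact of the demonstration
    "feedback_quality",    # outcomes, risks, constraints rather than vague preferences
    "decision_clarity",    # small set of decisions or experiments with owners
    "backlog_adaptation",  # visible Product Backlog changes
)


def score(ratings: dict[str, int]) -> tuple[float, str]:
    """Return the mean rating and the weakest criterion (assumed 1-5 scale)."""
    missing = [c for c in CRITERIA if c not in ratings]
    if missing:
        raise ValueError(f"missing ratings: {missing}")
    for c in CRITERIA:
        if not 1 <= ratings[c] <= 5:
            raise ValueError(f"{c} must be rated from 1 to 5")
    mean = sum(ratings[c] for c in CRITERIA) / len(CRITERIA)
    weakest = min(CRITERIA, key=lambda c: ratings[c])  # first criterion wins ties
    return mean, weakest


mean, weakest = score({"goal_framing": 4, "demo_realism": 3, "feedback_quality": 4,
                       "decision_clarity": 2, "backlog_adaptation": 3})
print(f"{mean:.1f} / 5, improve next: {weakest}")  # 3.2 / 5, improve next: decision_clarity
```

Surfacing a single weakest criterion keeps the scorecard actionable: one improvement per Sprint is easier to sustain than a broad list.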

Context-specific Sprint Reviews: adapting the event for B2B, complex products, multi-team delivery, and remote work

Effective Sprint Reviews keep Scrum principles intact while adapting format to context, because product complexity and organizational structure change what stakeholders need to see. In B2B, show workflows that map to customer accounts, integrations, and operational readiness, because value depends on adoption and deployment realities. In complex or platform products, structure the review by value themes and risk hotspots, not by components, so stakeholders understand impact without getting lost in architecture. In multi-team environments, avoid the “demo parade” by defining a single narrative thread and selecting only the increments that materially change user experience or strategic goals. In remote settings, protect the event by testing tooling, providing access to environments, and creating clear channels for feedback capture, because friction and lag quickly reduce discussion. The goal remains the same: evidence, feedback, and adaptation of the Product Backlog, regardless of format.

Managing many stakeholders: preventing the review from becoming a noisy forum

When many departments attend, a Sprint Review can become a forum where everyone advocates for local priorities, which dilutes learning and leaves the team unable to adapt coherently. You prevent this by framing the session around the Product Goal and by using shared prioritization criteria such as customer impact, revenue potential, risk reduction, compliance, or operational load. Require feedback to be expressed as a problem, outcome, or constraint rather than as a demanded solution, because solution battles create politics and reduce clarity. Use asynchronous channels for detailed requests so the live meeting stays focused on the highest-value decisions and learning moments. Close with a crisp summary of what was decided, what will be explored, and what was parked, so stakeholders feel heard while the product direction remains coherent and the Product Backlog stays truthful.
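One way to make shared prioritization criteria tangible is a weighted score. The text lists the criteria but does not prescribe a scoring method, so the weighted sum, the 0 to 5 rating scale, the weights, and the example requests below are all assumptions for illustration; operational load is modeled as "load reduction" so that higher is better.

```python
# Hypothetical weights; a Product Owner would agree these with stakeholders.
WEIGHTS = {
    "customer_impact": 0.30,
    "revenue_potential": 0.20,
    "risk_reduction": 0.20,
    "compliance": 0.15,
    "operational_load_reduction": 0.15,  # higher means less operational load
}


def priority(scores: dict[str, int]) -> float:
    """Weighted sum of 0-5 ratings on the shared criteria; unrated criteria count as 0."""
    unknown = set(scores) - WEIGHTS.keys()
    if unknown:
        raise ValueError(f"unknown criteria: {sorted(unknown)}")
    return sum(weight * scores.get(name, 0) for name, weight in WEIGHTS.items())


requests = {
    "Bulk export for finance": {"revenue_potential": 4, "operational_load_reduction": 3},
    "Audit trail for admin actions": {"compliance": 5, "risk_reduction": 4},
    "New dashboard colour theme": {"customer_impact": 1},
}
ranked = sorted(requests, key=lambda name: priority(requests[name]), reverse=True)
print(ranked)
# ['Audit trail for admin actions', 'Bulk export for finance', 'New dashboard colour theme']
```

The value is less in the exact numbers than in forcing every request to be argued on the same criteria, which keeps the discussion tied to the Product Goal instead of departmental advocacy.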

Advanced facilitation techniques: turning Sprint Review energy into commitment and clarity

Advanced facilitation makes the Sprint Review feel efficient and meaningful, which increases stakeholder participation and improves decision quality over time. Start by setting a working agreement that promotes curiosity, such as “challenge assumptions, not people,” because safety drives honest feedback. Use timeboxed segments with explicit transitions so the meeting does not drift, and assign a visible feedback recorder so ideas do not vanish into chat logs. Encourage stakeholders to prioritize their top concerns in real time, which reduces the tendency to list everything and increases the chance of reaching decisions. When conflict arises, anchor discussion in outcomes and evidence from the Increment, because evidence reduces positional debate and accelerates alignment. These techniques do not add complexity; they remove friction and make the review feel like the shortest path to better product direction.

Designing the demo for insight: scenario selection, edge cases, and “show the constraints”

The most valuable Sprint Review demonstrations are designed to expose the product’s truth, not to hide its imperfections behind polished flows. Choose scenarios that represent real usage and that highlight the decision you want to inform, because stakeholders respond best when they can connect what they see to real outcomes. Include at least one edge case or constraint-driven flow when relevant, because constraints often create the biggest surprises after release and are expensive to fix late. Explicitly show what the Increment does not do yet, and explain why, because transparency prevents stakeholders from forming incorrect expectations that later become conflict. Keep the demo short enough to protect discussion time, because the discussion is where adaptation happens and where the Product Backlog becomes sharper. When you treat the demo as evidence rather than as performance, stakeholders tend to offer clearer, more actionable feedback.

Linking Sprint Reviews to Product Strategy: Product Goal, roadmap, and outcome-based planning

A Sprint Review becomes far more powerful when it reinforces a coherent product strategy, because stakeholders can then evaluate increments against direction rather than against personal preferences. Start by connecting the Increment to the Product Goal, and explicitly state what outcome you are trying to influence, such as activation, retention, operational efficiency, or risk reduction. Use the review to test whether roadmap assumptions still hold, because market changes, competitor moves, and internal constraints can invalidate earlier plans. When stakeholders see how the Increment maps to outcomes, they give feedback at the right level, such as “this won’t reduce onboarding time because step X still blocks users,” rather than “I don’t like this screen.” This strategic framing also helps the team resist scope creep, because new requests must compete against a clear goal and a visible backlog. Over time, the Sprint Review becomes a strategy execution checkpoint grounded in evidence.

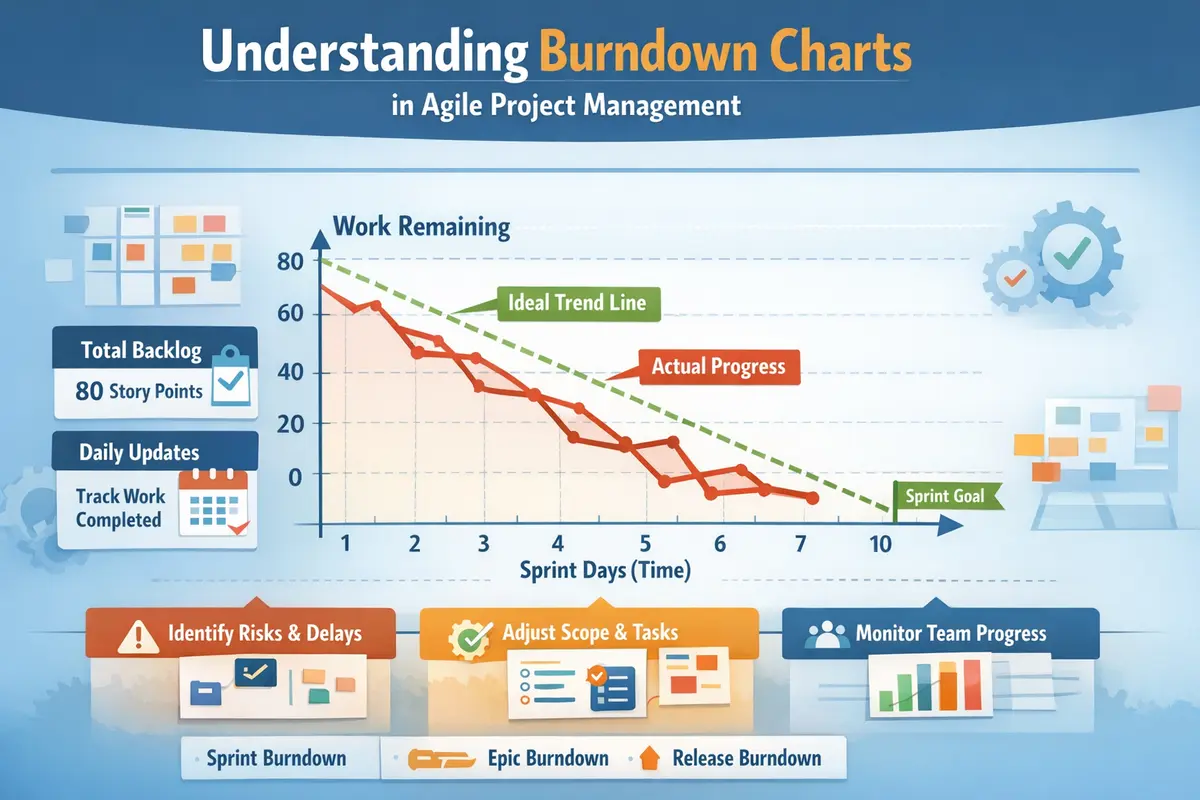

Quantifying progress without turning the review into a metrics meeting

Stakeholders often want numbers, but a Sprint Review should use metrics to clarify decisions, not to replace product conversation. Choose a small set of indicators tied to the Product Goal, and use them to frame questions like “what did we learn?” and “what should we change?” rather than to report performance for its own sake. When you introduce a metric, link it to the Increment behavior you demonstrated, because the combination of evidence and measurement improves understanding and reduces debate. Avoid drowning participants in dashboards, because too many metrics make it harder to see which action matters next. When metrics are immature, use qualitative signals like support themes, sales objections, or observed workflow friction as valid evidence for backlog adaptation. The aim is decision clarity, not metric theatre, and the review should remain a working session that updates the Product Backlog based on the best available signals.

Mini FAQ: Sprint Review answers that match common 2026 search intent

What is the difference between a Sprint Review and a sprint demo?

A sprint demo usually means “show what we built,” while a Sprint Review means “use what we built to decide what to do next.” In a demo-only format, stakeholders react to surface-level impressions and the team often collects opinions that do not translate into backlog actions. In a Sprint Review, you demonstrate the Increment as evidence, then guide a structured discussion that inspects value, risk, and alignment with the Product Goal. The outcome should be visible adaptation, typically through changes in the Product Backlog or through clearly defined experiments and follow-ups. If your meeting ends with no decisions, no backlog movement, and no shared next steps, you ran a demo, not a Sprint Review.

Who should run the Sprint Review: the Product Owner or the Scrum Master?

The most effective approach is shared responsibility aligned to roles: the Product Owner leads the value framing and decision focus, while the Scrum Master facilitates the event so it stays effective and safe. The Product Owner is best positioned to restate priorities, connect the Increment to the Product Goal, and guide backlog trade-offs based on stakeholder input. The Scrum Master protects timeboxing, keeps conversation constructive, and ensures the session remains an inspection-and-adaptation working meeting rather than a judgment forum. In some teams the Product Owner facilitates everything, but that works only if facilitation skills remain strong and stakeholder dynamics are manageable. The simplest test is outcome-based: the facilitator setup is correct if the Sprint Review consistently produces actionable feedback and Product Backlog adaptation.

Should you cancel the Sprint Review if the Sprint Goal was not met?

Canceling the Sprint Review when the Sprint Goal is not met removes transparency exactly when inspection and adaptation matter most. The review is the place to show what is truly “Done,” clarify what blocked progress, and adjust direction or expectations based on evidence rather than on assumptions. Skipping it often increases risk, because stakeholders receive less information and the team loses a structured opportunity to realign priorities and constraints. You can adapt the format by reducing demonstration time and increasing discussion about risks, dependencies, and the most valuable next steps. A mature Sprint Review treats shortfalls as learning signals that guide backlog adaptation, not as reasons to hide the Increment. The goal remains the same: inspect reality and decide how to respond.

How do you make Sprint Reviews useful for stakeholders who say they have no time?

When stakeholders say they have no time, the real shortage is usually perceived value, not calendar minutes, so the solution is to make the Sprint Review demonstrably useful and time-respectful. Shorten the meeting by selecting only the increments that require input or decisions, and avoid exhaustive walkthroughs that do not change direction. Publish a clear agenda with explicit decision targets so stakeholders know why their presence matters and what kind of input will be most helpful. Demonstrate through real workflows, then capture feedback visibly and show how it changes the Product Backlog, because visible adaptation reinforces the usefulness of participation. If stakeholders still resist, refine your invite list to focus on the people whose insight or authority genuinely affects prioritization and outcomes. When the review reliably produces decisions, attendance typically improves on its own.